How to Use Twitter’s API to Capture Tweet Data with Python

What is an API?

An API (Application Programming Interface) is a software interface that lets two applications interact with each other without direct involvement from a user. It gives a developer a way to request services from an operating system or another application, and to expose data in different contexts and across multiple platforms.

How does an API work?

An API works through a set of rules that define how applications and computers communicate with each other. In other words, an API acts as a middleman between two machines that need to connect for a specific task.

For example, suppose you want to display a map of your location on your website. Google’s platform provides an API that lets authorized users request and retrieve maps from Google. When your page loads, it connects to the Google platform, where the request is authenticated. A map is then retrieved and sent back to your website, where it is displayed.
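The request, authenticate, respond flow described above can be sketched in a few lines of Python. This is a toy illustration only: both sides run in one process, nothing is sent over a network, and every name in it (`map_service`, `render_page`, `VALID_KEYS`, the demo key) is hypothetical rather than part of any real Google API.

```python
# Toy illustration: the "provider" and the "website" are plain functions.
# All names and the key below are hypothetical.
VALID_KEYS = {"demo-key-123"}


def map_service(request: dict) -> dict:
    """Plays the provider's role: authenticate the caller, then answer."""
    if request.get("api_key") not in VALID_KEYS:
        return {"status": 401, "error": "unauthorized"}
    location = request.get("location")
    if not location:
        return {"status": 400, "error": "missing location"}
    return {"status": 200, "map": f"<map centered on {location}>"}


def render_page(api_key: str, location: str) -> str:
    """Plays the website's role: send a request and display the result."""
    response = map_service({"api_key": api_key, "location": location})
    if response["status"] != 200:
        return f"Map unavailable ({response['error']})"
    return response["map"]


print(render_page("demo-key-123", "Chicago"))
print(render_page("wrong-key", "Chicago"))
```

The website never sees how the provider builds the map. It only needs to know the rules of the exchange: what to send, and what shape the reply will take.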

The Twitter API provides companies, developers, and users with programmatic access to Twitter’s vast amount of public data. Twitter defines its platform as “what’s happening in the world and what people are talking about right now.” Twitter currently has 396.5 million users. Every day, 206 million users access Twitter, and 75% of them are not based in the United States.
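To make "programmatic access" concrete, here is a minimal sketch of what a request for public tweets might look like. It assumes the v2 recent search endpoint and bearer-token authentication. Endpoint availability and access levels have changed over time, so treat the URL as an assumption to check against the current documentation. The helper `build_search_request` and the token placeholder are hypothetical, and the request is only built, not sent.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Assumed endpoint: Twitter API v2 recent search.
SEARCH_URL = "https://api.twitter.com/2/tweets/search/recent"


def build_search_request(query: str, bearer_token: str, max_results: int = 10) -> Request:
    """Build (but do not send) a GET request for recent public tweets."""
    params = urlencode({"query": query, "max_results": max_results})
    return Request(
        f"{SEARCH_URL}?{params}",
        headers={"Authorization": f"Bearer {bearer_token}"},
    )


# "YOUR_BEARER_TOKEN" is a placeholder; a real token comes from a developer account.
request = build_search_request("python lang:en", "YOUR_BEARER_TOKEN")
print(request.get_method(), request.full_url)
```

Sending the request (for example with `urllib.request.urlopen`) would require a valid token, which is why a developer account is needed first.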

If you would like a more detailed description of the Twitter API, go to this link.

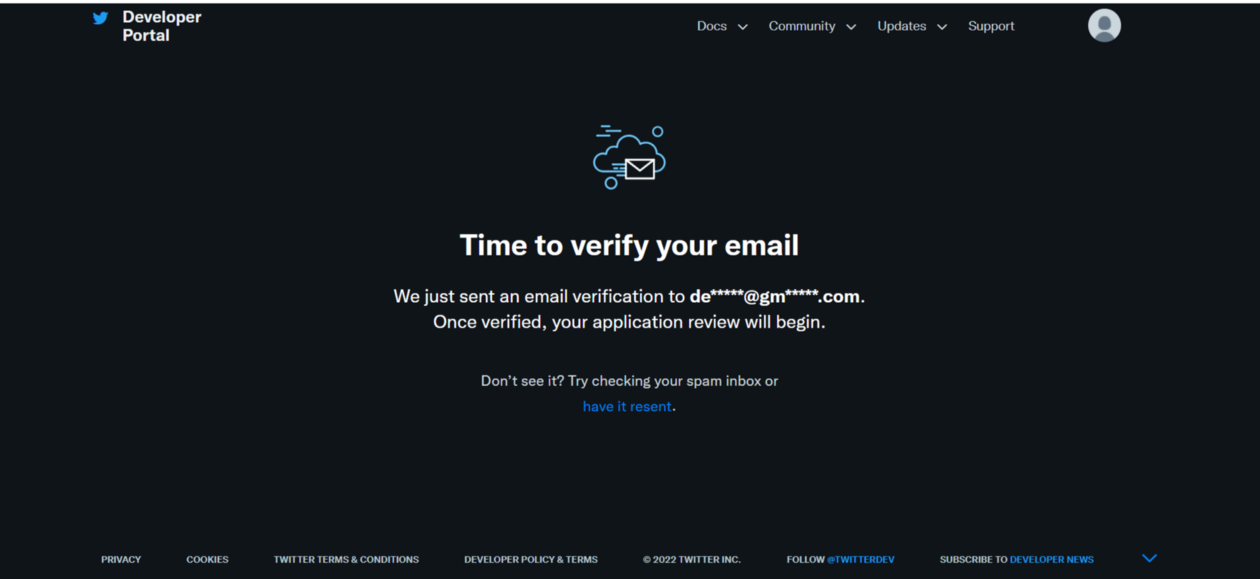

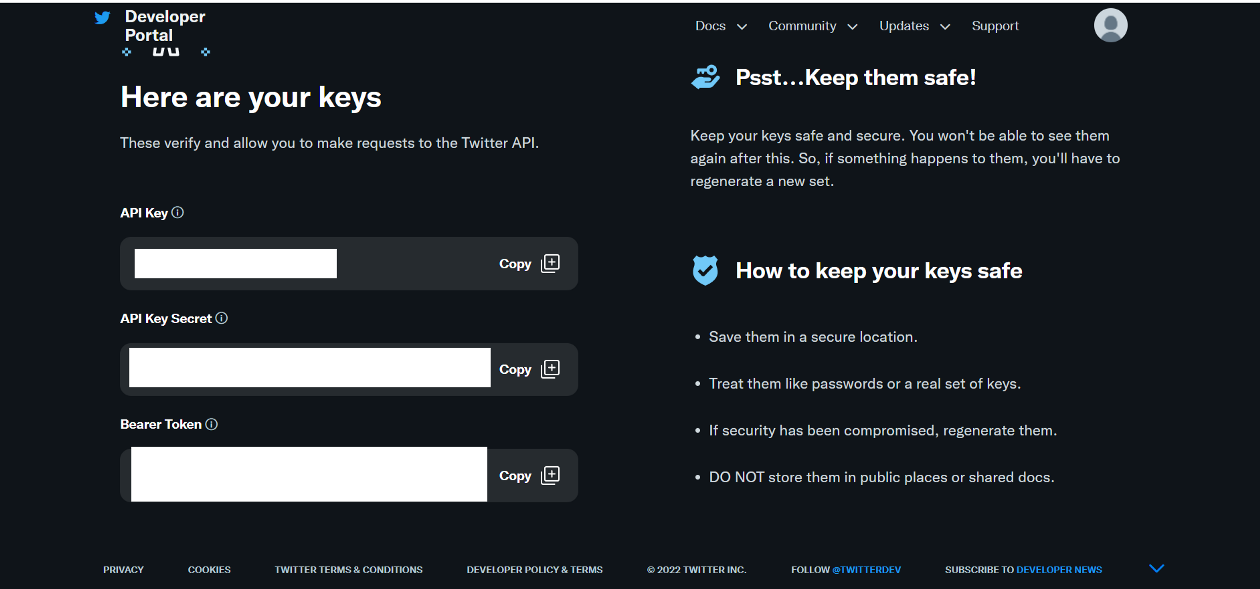

Set Up a Twitter Developer Account

Before you can use the Twitter API, you must set up a Twitter developer account.

The steps to set one up are listed below.

1. Create a personal Twitter account. If you already have a Twitter account, you can skip this step.