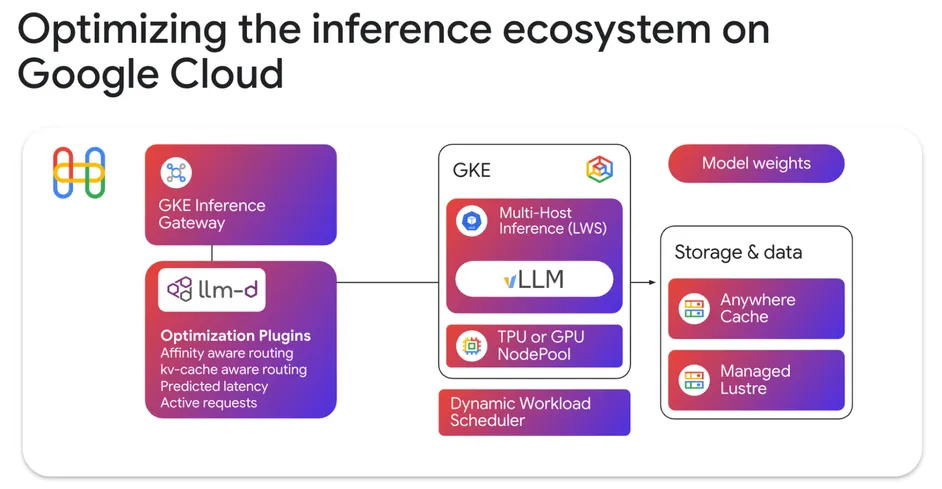

Google Cloud announced that the llm-d project has been accepted as a Cloud Native Computing Foundation (CNCF) Sandbox project. Built in collaboration with industry leaders such as Red Hat, IBM Research, CoreWeave, and NVIDIA, llm-d aims to provide an inference-serving framework that works with any model, on any accelerator, in any cloud. Alongside it, the introduction of GKE Inference Gateway and the Kubernetes LeaderWorkerSet (LWS) API, together with vLLM support for Cloud TPUs, is strengthening the infrastructure for serving AI at scale.

Kaptain #Kubernetes (@kaptain), FAUN.dev(): Kubernetes Weekly Newsletter, Kaptain. Curated Kubernetes news, tutorials, tools and more!