Introducing Ternary Bonsai: Top Intelligence at 1.58 Bits

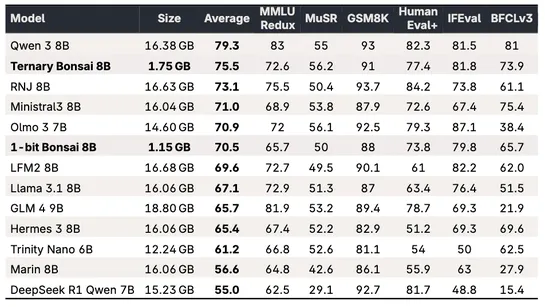

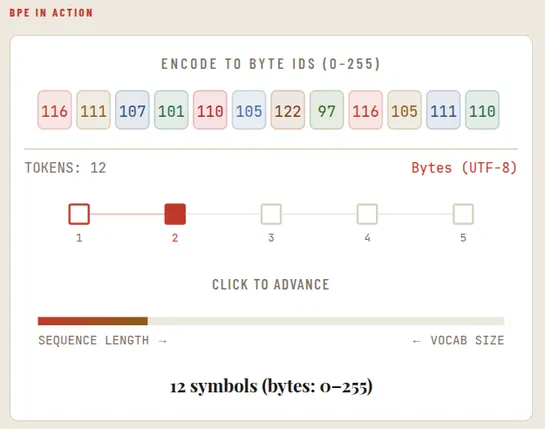

PrismML unveils Ternary Bonsai: a family of 1.58-bit LMs in 1.7B, 4B, and 8B sizes. The models use ternary weights {-1, 0, +1} with group-wise quantization: each group of 128 weights shares an FP16 scale. That cuts memory by ~9x versus 16-bit and boosts benchmark scores. The 8B hits 75.5..
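
To make the numbers concrete, here is a minimal sketch of group-wise ternary quantization and the memory arithmetic behind the ~9x figure. The "1.58 bits" is log2(3), the information content of a three-valued weight. The absmean scaling rule and the function names (`quantize_group`, `dequantize_group`, `bits_per_weight`) are assumptions for illustration only; the post does not say how PrismML picks scales or packs weights.

```python
import math
import struct

GROUP_SIZE = 128  # weights per shared scale, as stated in the post
SCALE_BITS = 16   # one FP16 scale per group, as stated in the post


def to_fp16(x):
    """Round a Python float to the nearest FP16 value (stored scale precision)."""
    return struct.unpack("e", struct.pack("e", x))[0]


def bits_per_weight(group_size=GROUP_SIZE, scale_bits=SCALE_BITS):
    """Ideal ternary cost log2(3) plus the amortized cost of the shared scale."""
    return math.log2(3) + scale_bits / group_size


def quantize_group(weights):
    """Map one group of floats to codes in {-1, 0, +1} plus one FP16 scale.

    Assumption: the scale is the mean absolute value of the group (absmean).
    The actual Ternary Bonsai rule may differ.
    """
    scale = to_fp16(sum(abs(w) for w in weights) / len(weights))
    if scale == 0.0:
        return [0] * len(weights), 0.0
    codes = [max(-1, min(1, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_group(codes, scale):
    """Reconstruct approximate weights: each one is -scale, 0, or +scale."""
    return [c * scale for c in codes]


effective_bits = bits_per_weight()   # about 1.71 bits per weight
compression = 16 / effective_bits    # about 9.4x versus 16-bit weights

codes, scale = quantize_group([0.9, -1.1, 0.05, 0.0])
restored = dequantize_group(codes, scale)
```

Under these assumptions, the per-group scale adds 16/128 = 0.125 bits per weight, so the effective cost is roughly 1.71 bits and the ratio against 16-bit weights lands a little above 9x, consistent with the figure quoted above. Real storage formats may pack ternary values less tightly than the log2(3) ideal, which would lower the ratio somewhat.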