Fixing Ghost Drops: How eBPF Rescued IPv6 Telemetry

In this walkthrough, you use eBPF to patch malformed flow-export packets before the host network stack drops them... read more

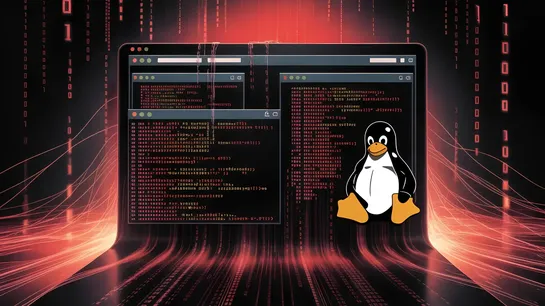

Kaspersky researchers explain how attackers use a compromised container to take over a Kubernetes cluster or host, with misconfigured APIs and permissions driving most escapes... read more

Qwen3.7-Plus is a powerful multimodal agent that seamlessly blends GUI and CLI interactions, excelling in coding, tool use, and productivity workflows. It generalizes across diverse agent frameworks, delivering competitive text performance and strong reasoning abilities across challenging STEM bench.. read more

This deep dive reveals a cutting-edge conversational analytics pipeline using Google Cloud and BigQuery to tackle multi-departmental data segmentation challenges with a hybrid semantic filtering approach. By pre-segmenting data and running targeted models, the pipeline uncovers granular insights oft.. read more

This article covers Python libraries that make large-scale data processing faster, more scalable, and easier to manage across modern data workflows... read more

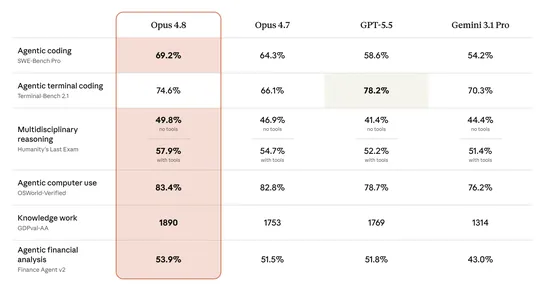

Claude Opus 4.8 delivers top-tier performance with honest and powerful collaboration, outpacing prior models and GPT-5.5 across multiple benchmarks. Opus 4.8's cutting-edge abilities and improved judgment set a new standard for enterprise AI, enhancing reliability and reasoning quality, ready for im.. read more

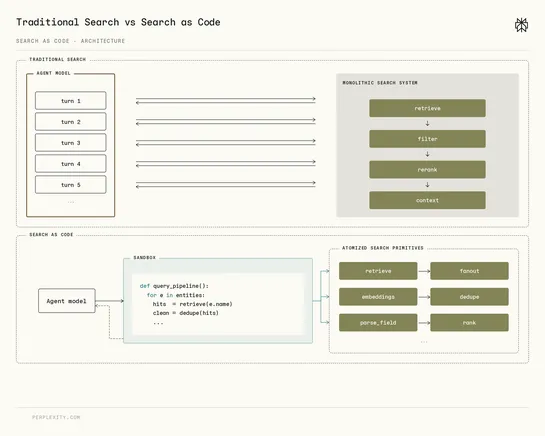

Perplexity's engineers introduced Search as Code, and developers use its Python SDK to call low-level retrieval primitives instead of sending queries to one search endpoint... read more

Intel designed Crescent Island, an AI inference GPU, with lower-cost memory and air cooling, and plans to ship limited quantities this year... read more

DevOps metrics show how quickly and reliably your team delivers software, which is valuable for saving money and building trust. DORA metrics are only part of the picture. Focus on key categories to understand whether overall delivery is improving. Don't just measure; find the bottleneck for real improvement... read more

A researcher disclosed CIFSwitch, a Linux local privilege escalation flaw present since 2007. Unprivileged users can exploit the CIFS Kerberos mount helper to gain root access... read more