Deploying FastMCP in Production

Gateway and proxy architectures

You can expose MCP servers publicly without an "MCP-native gateway", but large-scale production deployments often converge on one because:

- Streamable HTTP can involve SSE streaming responses and resumability, which can break behind default buffering or timeout settings.

- Session IDs (MCP-Session-Id) require either sticky routing or shared session state.

- Enterprises typically need governance: catalogs, secrets management, RBAC, audit logging, and server lifecycle management.

You can build these features yourself with a generic reverse proxy and custom tooling, but MCP-native gateways aim to reduce that operational burden by providing ready-made patterns for these common production needs.
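To make the sticky-routing requirement concrete, here is a minimal sketch of session-aware backend selection, the kind of logic a custom proxy layer would need. The backend URLs and the `pick_backend` helper are hypothetical names used for illustration; they are not part of FastMCP or of any gateway mentioned here.

```python
import hashlib
from typing import Mapping, Sequence

# Hypothetical backend addresses, for illustration only.
BACKENDS = ["http://mcp-a:8000", "http://mcp-b:8000", "http://mcp-c:8000"]


def pick_backend(headers: Mapping[str, str], backends: Sequence[str]) -> str:
    """Choose a backend deterministically from the MCP-Session-Id header."""
    # HTTP header names are case-insensitive, so normalize before lookup.
    normalized = {name.lower(): value for name, value in headers.items()}
    session_id = normalized.get("mcp-session-id")
    if session_id is None:
        # No session yet (for example, the first request): any backend works.
        return backends[0]
    # Hash the session ID so the same session always maps to the same index.
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]
```

Plain modulo hashing like this remaps most sessions whenever the backend list changes, which is one reason shared session state is the other common option.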

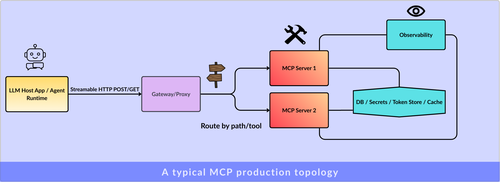

A typical production topology, with or without an MCP-native gateway, looks like this:

Typical MCP Production Topology

MCP-native gateways and proxies

Docker MCP Gateway:

Docker describes its MCP Gateway as a centralized entry point for MCP servers. It acts as a proxy and orchestrator.

Instead of running MCP servers directly on the host, it runs each server inside an isolated Docker container with restricted permissions. This improves security and separation between services.

The gateway handles:

- Starting and stopping MCP server containers.

- Routing client requests to the correct server.

- Injecting credentials securely (e.g., passing secrets as environment variables when the container starts).

- Providing built-in logging and call tracing for visibility.

In short, it’s a control layer that manages MCP servers as containerized workloads, adding isolation, routing, and observability on top.
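The isolation and credential-injection ideas can be sketched as a launcher that assembles a `docker run` command. This is an illustrative sketch, not how Docker's MCP Gateway is implemented; the `build_docker_run` helper, the image name, and the choice of hardening flags are assumptions.

```python
from typing import List, Sequence


def build_docker_run(image: str, secret_names: Sequence[str]) -> List[str]:
    """Build an argv list that runs an MCP server in a restricted container."""
    argv = [
        "docker", "run",
        "--rm",                 # remove the container when it exits
        "-i",                   # keep stdin open for a stdio-based server
        "--read-only",          # mount the root filesystem read-only
        "--cap-drop", "ALL",    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",
    ]
    for name in secret_names:
        # "-e NAME" without a value copies NAME from the launcher's own
        # environment, so the secret value never appears on the command line.
        argv += ["-e", name]
    argv.append(image)
    return argv
```

A launcher would pass the result to `subprocess.run` with the secrets present in its own environment, for example `build_docker_run("example/mcp-server:latest", ["API_TOKEN"])`.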

Microsoft MCP Gateway:

Microsoft’s MCP Gateway is designed mainly for Kubernetes environments. It acts as both a reverse proxy and a management layer for MCP servers.

It focuses on:

- Session-aware routing (so the same session goes to the same backend).

- Authorization and access control.

- Managing the lifecycle of MCP servers (deploy, update, delete).

- Telemetry and monitoring hooks.

In production mode, it runs as a stateless reverse proxy but uses a distributed session store. This means session data is stored outside the proxy, allowing scaling without losing session continuity.
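The stateless-proxy pattern can be sketched as follows: every proxy replica consults the same store to find the backend that owns a session. The `SessionStore` class below is an in-memory stand-in for a distributed store and `route` is a hypothetical helper; neither reflects Microsoft's actual implementation.

```python
import random
import time
from typing import Dict, Optional, Sequence, Tuple


class SessionStore:
    """In-memory stand-in for a shared, distributed session store."""

    def __init__(self, ttl_seconds: float = 3600.0) -> None:
        self._ttl = ttl_seconds
        self._entries: Dict[str, Tuple[str, float]] = {}

    def get(self, session_id: str, now: Optional[float] = None) -> Optional[str]:
        now = time.time() if now is None else now
        entry = self._entries.get(session_id)
        if entry is None:
            return None
        backend, expires_at = entry
        if now >= expires_at:
            # Expired sessions are dropped so they can be reassigned.
            del self._entries[session_id]
            return None
        return backend

    def put(self, session_id: str, backend: str, now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        self._entries[session_id] = (backend, now + self._ttl)


def route(store: SessionStore, session_id: str, backends: Sequence[str],
          now: Optional[float] = None) -> str:
    """Return the backend for a session, assigning one if none is recorded."""
    backend = store.get(session_id, now)
    if backend is None or backend not in backends:
        backend = random.choice(list(backends))
    # Writing on every request refreshes the session's time-to-live.
    store.put(session_id, backend, now)
    return backend
```

Because the mapping lives in the store rather than in the proxy process, any replica that calls `route` with the same store reaches the same backend for a given session.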

It is deployed using Kubernetes-native patterns such as StatefulSets and headless services.

It's suitable for large enterprise environments running many MCP servers, where governance, session handling, and lifecycle management are more important than keeping the infrastructure minimal.

Obot:

Obot is an open-source platform for hosting and managing MCP servers. It provides a registry, a gateway layer, and monitoring for MCP usage.

It supports:

- OAuth 2.1 and secure token handling.

- Running MCP servers locally with Docker.

- Deploying MCP servers to Kubernetes.

- Both stdio-based servers and multi-user HTTP servers.

In practice, it is designed for organizations that want a centralized, approved catalog of MCP servers, along with governance, auditing, and access control.

MetaMCP:

MetaMCP is a proxy that aggregates multiple MCP servers into a single unified MCP server. It supports namespaces and exposes endpoints over SSE or Streamable HTTP, with authentication options. This is a practical pattern when tool counts, per-team servers, or environment-variable secret handling become operational bottlenecks.
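The aggregation-with-namespaces idea can be illustrated with a small sketch that merges tool names from several servers and resolves a namespaced name back to its origin. The `__` separator and the function names are assumptions made for this example; MetaMCP's own naming scheme may differ.

```python
from typing import Dict, List, Tuple

SEPARATOR = "__"  # hypothetical separator chosen for this sketch


def aggregate_tools(servers: Dict[str, List[str]]) -> Dict[str, Tuple[str, str]]:
    """Map each namespaced tool name to (server name, original tool name)."""
    catalog: Dict[str, Tuple[str, str]] = {}
    for server, tools in servers.items():
        if SEPARATOR in server:
            raise ValueError(f"server name must not contain {SEPARATOR!r}: {server}")
        for tool in tools:
            # Prefixing with the server name keeps same-named tools distinct.
            catalog[f"{server}{SEPARATOR}{tool}"] = (server, tool)
    return catalog


def resolve(catalog: Dict[str, Tuple[str, str]], namespaced_name: str) -> Tuple[str, str]:
    """Find which upstream server and tool a namespaced call refers to."""
    try:
        return catalog[namespaced_name]
    except KeyError:
        raise LookupError(f"unknown tool: {namespaced_name}") from None
```

With this layout, two servers can both expose a tool called `search` without colliding, and the proxy still knows where to forward each call.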

Generic reverse proxy: NGINX streaming-safe snippet

NGINX buffers proxied responses by default. It collects the upstream response in its own buffers before passing it on, so small chunks can sit in NGINX until a buffer fills or the upstream finishes.

For streaming traffic like text/event-stream (SSE), this breaks the real-time effect. The client won’t see data as it’s produced.

So for MCP over Streamable HTTP, you usually need to disable buffering.

Conceptually:

    location /mcp {
        proxy_pass http://mcp_backend;

        # Allow real streaming
        proxy_buffering off;
        proxy_cache off;

        # Forward original client info
        proxy_set_header Host $host;
    }
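Buffering can also be addressed from the application side. The sketch below shows response headers and event framing that a Python SSE endpoint might use; NGINX skips buffering for a response that carries `X-Accel-Buffering: no`, unless the proxy is configured to ignore that header. The helper names are hypothetical and are not part of FastMCP.

```python
from typing import Dict, List, Optional


def sse_headers() -> Dict[str, str]:
    """Response headers for a streaming-friendly SSE endpoint."""
    return {
        "Content-Type": "text/event-stream",
        "Cache-Control": "no-cache",
        # Asks NGINX not to buffer this particular response.
        "X-Accel-Buffering": "no",
    }


def format_sse_event(data: str, event_id: Optional[str] = None) -> str:
    """Serialize one server-sent event, optionally tagged with an ID."""
    lines: List[str] = []
    if event_id is not None:
        # Clients can send the last seen ID back when they reconnect.
        lines.append(f"id: {event_id}")
    # Multi-line payloads need one "data:" field per line.
    for part in data.split("\n"):
        lines.append(f"data: {part}")
    # A blank line terminates the event.
    return "\n".join(lines) + "\n\n"
```

Event IDs matter for resumability: a client that reconnects can report the last ID it received so the server knows where to resume.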
