Agentic Coding is a Trap

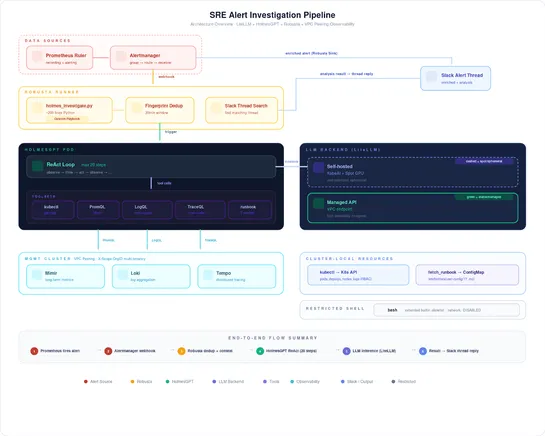

AI-driven coding agents are the hot new trend, but beware of the trade-offs: increased complexity, skills atrophy, vendor lock-in, and fluctuating costs. Only skilled developers can spot issues in the large volumes of generated code, but paradoxically, AI tools are impacting critical thinking skills ne..