An open-weights Chinese model just beat Claude, GPT-5.5, and Gemini in a programming challenge

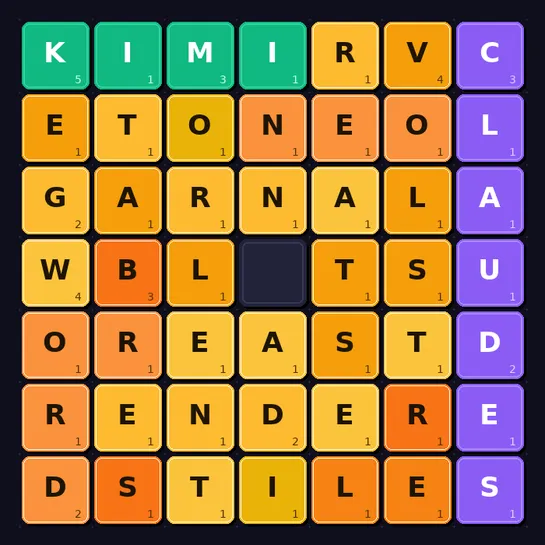

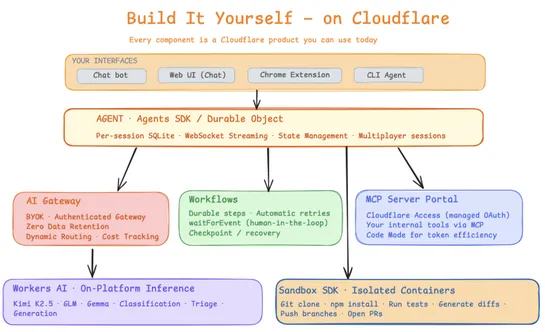

The AI Coding Contest Day 12 matched ten models on a sliding-letter puzzle. Open-weights Kimi K2.6 took first with 22 match points (7-1-0). MiMo V2-Pro placed second by blasting claims for intact ≥7-letter seeds (43 points). GPT-5.5 and Claude Opus 4.7 landed third and fifth. Grids ran from 10×10 to 30×30. Heavy scrambl..
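The summary does not say how match points are computed. As a rough sanity check, the 7-1-0 record matches the reported 22 points if it is read as wins-draws-losses and scored 3 points per win and 1 per draw. Both the reading and the scoring scheme are assumptions, and the `match_points` helper below is hypothetical, not the contest's own code.

```python
def match_points(wins: int, draws: int, losses: int,
                 win_pts: int = 3, draw_pts: int = 1) -> int:
    """Total match points under an ASSUMED 3/1/0 (win/draw/loss) scheme.

    The contest's real scoring rules are not given in the summary;
    the default point values here are only a guess that fits the numbers.
    """
    if min(wins, draws, losses) < 0:
        raise ValueError("record counts must be non-negative")
    # Losses are worth zero under this assumed scheme, so they add nothing.
    return wins * win_pts + draws * draw_pts


# Record reported for the first-place model: 7 wins, 1 draw, 0 losses.
total = match_points(7, 1, 0)
print(total)
```

Under these assumptions the total comes out to 22, the figure in the summary. Reading the record as wins-losses-draws would give 21 instead, which is why the wins-draws-losses reading seems the more likely one.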