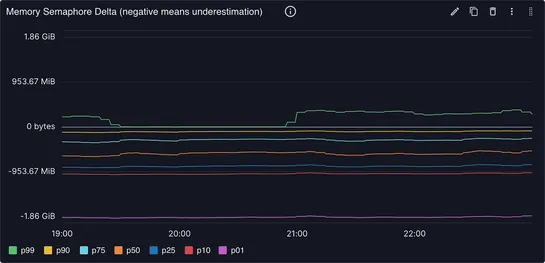

How We Reduced Median Memory Estimation Error by 99%, With the Help of AI

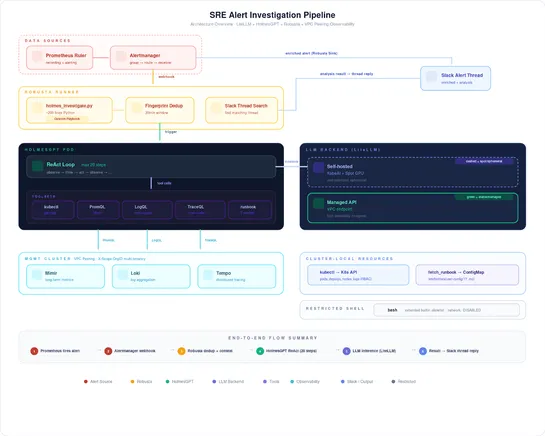

The compaction pipeline at Mixpanel ran into memory estimation issues that caused OOMKills. With AI-assisted analysis, the team reduced median estimation error by 99%, a significant improvement in memory estimation accuracy. Through thorough analysis and exploration of alter..