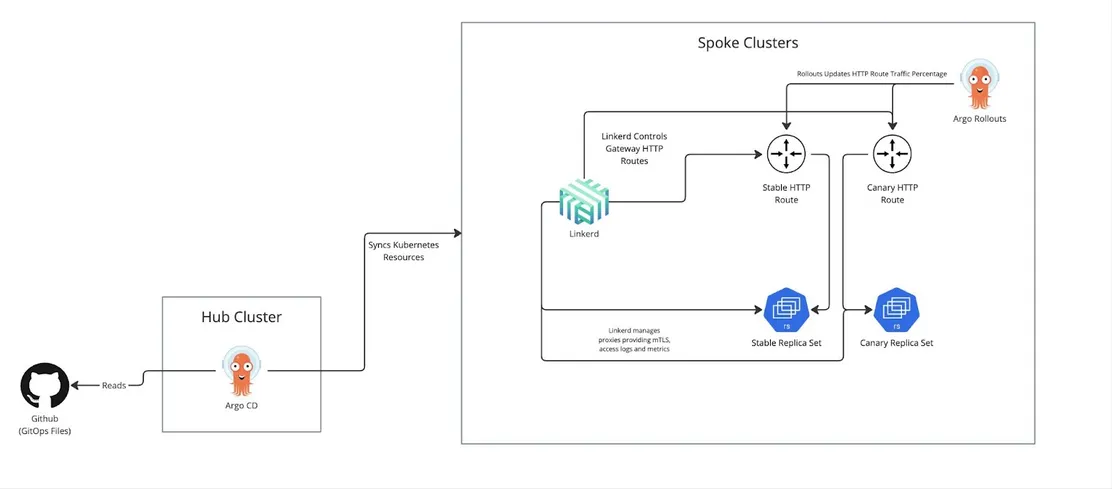

Imagine Learning tore down its old platform and rebuilt it on Linkerd with AWS EKS, layering in Argo CD and Argo Rollouts. The result? GitOps deploys, canary releases via the Gateway API, and mTLS baked in from the start.

The payoff:

- Over 80% cut in compute costs.
- 97% fewer service mesh CVEs.
- 20% drop in ops overhead.

System shift: This isn't just a tech upgrade. It's a clear bet on lightweight, GitOps-native meshes built for secure, scalable, multi-cluster Kubernetes.
