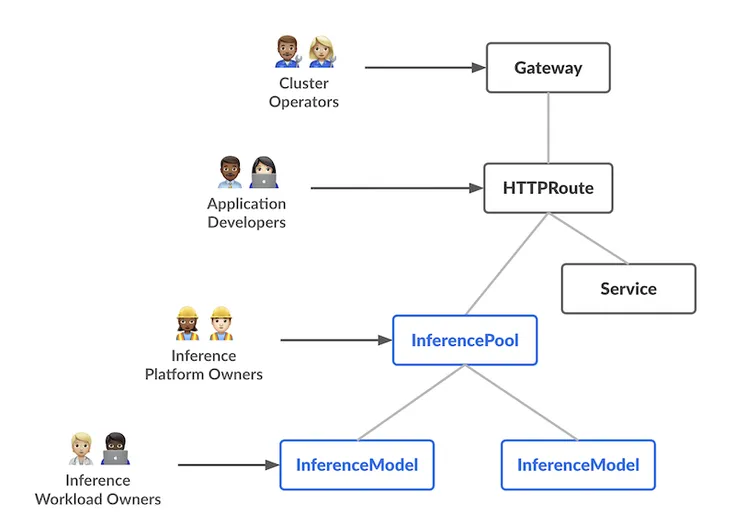

Gateway API Inference Extension gives AI workload routing on Kubernetes model-aware smarts, and it slices latency on GPU clusters like a samurai. Its Endpoint Selection Extension acts like a traffic cop on caffeine: it uses live metrics to steer each request to the best-suited pod, trimming those nagging tail latencies during traffic surges.
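To make "using live metrics to steer requests" concrete, here is a toy endpoint picker. It is a minimal sketch under stated assumptions and not the extension's actual algorithm or API. The `PodMetrics` fields (queue depth and KV-cache utilization as the live signals), the 0.9 KV-cache threshold, and `pick_endpoint` are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class PodMetrics:
    # Hypothetical per-pod signals; the real extension's metrics may differ.
    name: str
    queue_depth: int      # requests waiting on this model server
    kv_cache_util: float  # fraction of KV cache in use, 0.0 to 1.0


def pick_endpoint(pods: Sequence[PodMetrics], kv_cache_limit: float = 0.9) -> Optional[str]:
    """Return the name of the pod that should get the next request, or None if there are no pods."""
    if not pods:
        return None
    # Prefer pods with KV-cache headroom; if every pod is saturated, fall back to all of them.
    candidates = [p for p in pods if p.kv_cache_util < kv_cache_limit] or list(pods)
    # Shortest queue wins; lower KV-cache utilization breaks ties.
    best = min(candidates, key=lambda p: (p.queue_depth, p.kv_cache_util))
    return best.name


# Usage: vllm-a has the shortest queue but a nearly full KV cache, so it is skipped.
pods = [
    PodMetrics("vllm-a", queue_depth=4, kv_cache_util=0.95),
    PodMetrics("vllm-b", queue_depth=6, kv_cache_util=0.40),
    PodMetrics("vllm-c", queue_depth=6, kv_cache_util=0.30),
]
print(pick_endpoint(pods))  # prints vllm-c
```

A metric-blind round-robin balancer would keep handing requests to `vllm-a` even as its cache fills up, which is exactly the kind of hot spot that stretches tail latency under load.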
