TL;DR

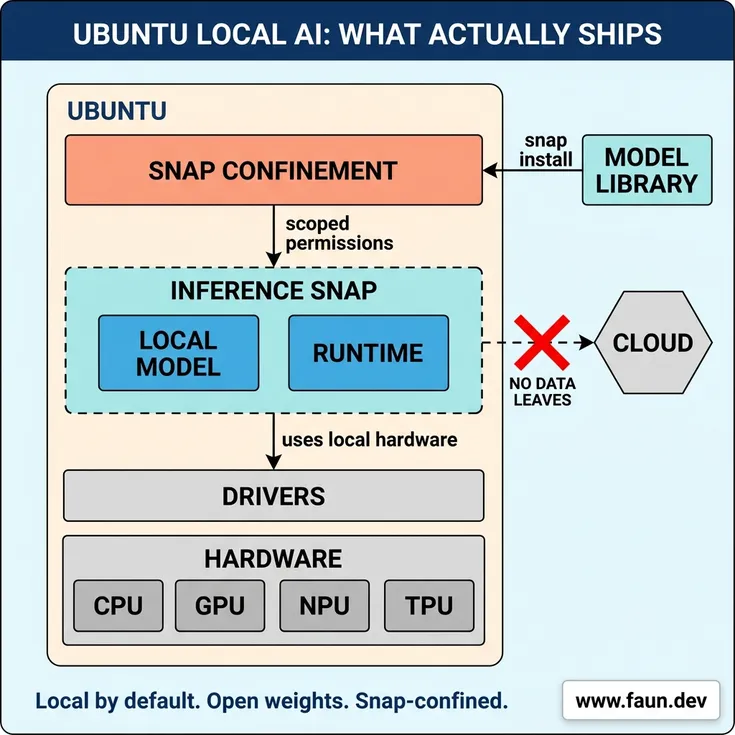

Ubuntu is getting local AI as a native capability over the next year, with inference snaps that install models like any other package, AI-powered accessibility features, and confined agentic workflows for both desktops and server fleets. Canonical is betting on open weight models, local-by-default inference, and snap confinement, a deliberate counter to the cloud-first AI direction Microsoft, Apple, and Google are taking with their operating systems.

Key Points

Ubuntu is integrating local AI capabilities, centered on local inference and AI-enhanced accessibility. Models will be installed like any other package, on both desktop and server environments.

The operating system will feature implicit AI, which improves existing functionalities like speech-to-text, and explicit AI, which introduces new agentic workflows for tasks such as document authoring and automated troubleshooting.

Local inference will be optimized for specific hardware through partnerships with silicon vendors, while snap confinement keeps models from reaching indiscriminately into user data.

Agentic workflows aim to simplify user interactions with Linux by enabling tasks like troubleshooting and system configuration through natural language, potentially transforming user experience on both desktops and servers.

Ubuntu's strategy emphasizes local control over cloud dependency, betting on advancements in consumer hardware and open weight models to maintain competitiveness and user preference for local solutions.

Ubuntu is heading somewhere genuinely different from the rest of the desktop and server OS market. Over the next year, the distribution will gain local inference as a first-class capability, AI-enhanced accessibility features, and confined agentic workflows that span both the desktop and the server fleet. The direction was laid out by Canonical's VP of Engineering Jon Seager in a post on Ubuntu Discourse on April 27, 2026, but the substance is what Ubuntu users, sysadmins, and SREs should actually care about: the OS is getting more capable, and it's doing it on its own terms.

Local inference, installed like any other package

The most concrete shift is that inference is becoming a native Ubuntu capability. Instead of stitching together Ollama, Hugging Face downloads, and quantization choices, users will install models the same way they install anything else:

snap install nemotron-3-nano

Inference snaps deliver bits optimized for the user's specific silicon when the vendor has contributed them, courtesy of Canonical's silicon partnerships. They are subject to the same confinement rules as every other snap, which means models cannot reach indiscriminately into the host or user data. The capability is already shipping, and Canonical is ramping up teams to keep pace with new model releases and expand optimized variants across more silicon platforms.

Recent open weight releases like Gemma 4 and Qwen-3.6-35B-A3B are evidence that this approach works. Both demonstrate advanced tool-calling, which means that, given a harness that exposes the relevant tools, they can search the web, hit external APIs, touch the filesystem, troubleshoot live systems, and reason about topics outside their training data, all from a model running on the user's machine.
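To make "tool-calling" concrete, here is a minimal sketch of the harness side of that loop: the model emits a structured request, and the surrounding program decides whether and how to run it. The JSON shape, the `TOOLS` registry, and the `add` and `word_count` tools are all hypothetical and chosen for illustration; they are not the format used by Gemma, Qwen, or any Ubuntu component.

```python
import json
from typing import Callable, Dict

# Hypothetical tool registry; names and functions are illustrative only.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Register a function under a tool name the model may request."""
    def decorator(fn):
        TOOLS[name] = fn
        return fn
    return decorator


@tool("add")
def add(a: float, b: float) -> str:
    return str(a + b)


@tool("word_count")
def word_count(text: str) -> str:
    return str(len(text.split()))


def dispatch(model_output: str) -> str:
    """Parse a tool request (assumed JSON shape) and run the matching tool."""
    request = json.loads(model_output)
    name = request["tool"]
    if name not in TOOLS:
        # Unknown tools are refused rather than guessed at.
        return f"error: unknown tool {name!r}"
    return TOOLS[name](**request.get("arguments", {}))


# Stand-in for text a model might emit when it decides to call a tool.
print(dispatch('{"tool": "add", "arguments": {"a": 2, "b": 3}}'))
```

The point of the sketch is that the model only asks; the harness owns the registry and can refuse anything outside it, which is where confinement and permissions would attach.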

Two kinds of AI features, and only one will be visible

Ubuntu will gain AI features in two distinct ways, and the distinction matters for users.

Implicit AI enhances things Ubuntu already does without changing how users think about the system. The flagship example is first-class speech-to-text and text-to-speech, framed as accessibility features rather than AI features. Users get a meaningfully better experience and don't need to learn new mental models. Most of this can run locally on open weight models with minimal drawbacks.

Explicit AI is the more visible surface: agentic workflows for authoring documents and applications, automated troubleshooting, and personal automation tasks like targeted daily news briefings. These ship as opt-in capabilities for users who want them, with the security and confinement controls Ubuntu users expect from snap-packaged software.

Implicit AI will improve what Ubuntu already does. Explicit AI will be introduced as a new feature. Neither will be forced on anyone.

The agentic future, on desktop and server

The more interesting bet is what happens when agents get integrated into Ubuntu's system layer.

On the desktop, the goal is to make the full power of Linux accessible to users who never learned the underlying primitives. Imagine asking your machine to troubleshoot a Wi-Fi connection or to stand up a pre-configured, TLS-secured open source software forge. The Linux desktop's historical fragmentation has produced excellent software but a frustrating integration experience for newcomers. Thoughtfully deployed LLMs could close that gap, with the same agent reachable from a mobile app, text messaging, or voice.

The server side is where this gets strategically interesting. For SREs running Ubuntu fleets, agents could interpret logs during incidents to accelerate root cause analysis or execute scheduled maintenance with strict guardrails. The argument is that delegating SRE work to an agent does not introduce a new class of risk, because well-run production environments already enforce access controls, audit trails, and separation between observation and action. Ubuntu's job is to expose the right primitives, including read-only analysis, tightly scoped action permissions, and full auditability, so agents inherit existing operational boundaries rather than bypass them.

Snap confinement and the consolidation of core system functions are the foundation that makes this safe to attempt. Most distributions don't have that foundation in place, which is part of why Ubuntu is positioned to move on this.
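The three primitives named above (read-only analysis, tightly scoped action permissions, and full auditability) can be sketched as a small gate that every agent request passes through. This is an illustrative model only, not an Ubuntu or snapd API; `AgentPolicy`, `AuditedGate`, and the action names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import FrozenSet, List


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical policy: what an agent may observe and what it may change."""
    read_only: FrozenSet[str]
    scoped_actions: FrozenSet[str] = frozenset()


@dataclass
class AuditedGate:
    """Checks each request against the policy and records the decision."""
    policy: AgentPolicy
    audit_log: List[dict] = field(default_factory=list)

    def request(self, agent: str, action: str) -> bool:
        if action in self.policy.read_only:
            decision = "allow-read"
        elif action in self.policy.scoped_actions:
            decision = "allow-action"
        else:
            decision = "deny"  # anything not explicitly granted is refused
        # Every request is logged, including the ones that are denied.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "decision": decision,
        })
        return decision != "deny"


policy = AgentPolicy(
    read_only=frozenset({"read_journal", "list_units"}),
    scoped_actions=frozenset({"restart_unit:nginx"}),
)
gate = AuditedGate(policy)

print(gate.request("sre-agent", "read_journal"))             # observation
print(gate.request("sre-agent", "restart_unit:nginx"))       # scoped action
print(gate.request("sre-agent", "restart_unit:postgresql"))  # out of scope
```

Keeping observation and action in separate sets mirrors the separation the article describes: an agent can be granted broad read access for root cause analysis while its ability to change anything stays narrow and logged.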

Open weights, with eyes open

Ubuntu will favor open weight models with license terms compatible with its values, paired with open source harnesses. The phrasing is deliberate. Access to model weights is meaningful, but it is not equivalent to the transparency the open source community is used to, and Canonical is not pretending otherwise. Model selection will weigh the full terms of the license, not just whether weights are downloadable.

This matters because the open weight ecosystem is still working out what "open" actually means for AI. Ubuntu picking models on license terms rather than marketing labels gives the broader community a reference point, and it gives users a credible signal about what they're running.

Hardware reality

Capable local inference is gated by capable hardware, and smaller parameter models still cannot match the largest cloud models on every task. The gap is closing. Silicon vendors are shipping consumer hardware with steadily improving inference performance, and native accelerators reduce power draw alongside compute gains. Ubuntu's silicon partnerships and enablement work are designed to make sure the OS is ready when commodity hardware catches up.

For now, users on older or underpowered machines will see less benefit from the local-first approach. That is the honest tradeoff for not sending data to someone else's cloud.
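A rough back-of-the-envelope calculation shows why hardware is the gate. The memory needed just to hold a model's weights is approximately the parameter count times the bytes per weight. The sketch below assumes a model with 35 billion total parameters (a figure inferred from the "35B" in the Qwen model name above, not a confirmed specification) and ignores the KV cache, activations, and runtime overhead, so real requirements are higher.

```python
def weight_memory_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


# Compare common precisions for an assumed 35B-parameter model.
for bits in (16, 8, 4):
    print(f"35B params at {bits}-bit: ~{weight_memory_gib(35, bits):.1f} GiB")
```

Under these assumptions, quantizing from 16-bit to 4-bit cuts the weight footprint to a quarter, which is the difference between needing workstation-class memory and fitting on a well-equipped consumer machine.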

What this means for Ubuntu users

For desktop users, the near-term wins are accessibility upgrades and a much simpler path to running local models. For developers, inference snaps remove the friction of the current local-LLM tooling stack. For SREs and platform teams, the agentic primitives Ubuntu is building toward could change how fleets get managed, with audit trails and scoped permissions baked into the OS rather than bolted on.

For everyone else, the most important detail is what Ubuntu is not doing. There is no mandatory AI assistant. There is no telemetry-driven cloud companion. AI features will land when they're mature enough to ship, with a default bias toward local inference, and the OS itself stays recognizably Ubuntu.

The bet

Ubuntu's direction is a wager on three things: that consumer hardware will keep getting better at inference, that open weight models will stay competitive with closed ones, and that a meaningful slice of users and enterprises will prefer local control over cloud convenience. If those hold, Ubuntu has a genuinely differentiated position in a market where Microsoft, Apple, and Google are pulling their operating systems toward vendor-controlled cloud AI.

If they don't hold, Canonical will need to revisit the local-by-default stance. But the architectural choices being made now, including snap confinement, silicon partnerships, and consolidated system functions, are the ones that keep that option open while most of the industry locks itself into cloud dependencies.

Ubuntu is becoming an operating system that quietly gets more capable, while letting users keep their data and their choices.

Stakeholder Relationships

People

Jon Seager: Leads Ubuntu engineering and is directing the distribution's AI integration strategy.

Organizations

Canonical: Driving Ubuntu's shift toward local AI inference, open weight models, and confined agentic workflows, in deliberate contrast to the cloud-first AI direction of Microsoft, Apple, and Google.

Tools

Ubuntu: Gaining native local AI inference, AI-powered accessibility features, and opt-in agentic workflows for desktop users and SRE fleets throughout 2026.

Snaps: The confinement and packaging foundation that makes Ubuntu's local AI strategy viable, ensuring models and agents cannot access the host or user data indiscriminately.

Inference snaps: Single-command installation of hardware-optimized AI models, replacing the friction of assembling local LLM tooling manually and giving Ubuntu users vendor-tuned inference out of the box.

Timeline of Events

Jon Seager publishes a post on jnsgr.uk laying out the technical foundation of inference snaps, later presented at an AI Native Dev meetup.

Canonical starts incentivizing engineering teams to each go deep on different AI tools and stacks, with a six-month learning window before consolidating findings.

On April 27, 2026, Canonical's VP of Engineering Jon Seager publishes the official direction on Ubuntu Discourse: local-by-default inference, open weight models, snap confinement, no mandatory AI assistant.

Implicit AI features (accessibility upgrades like speech-to-text and text-to-speech) and explicit AI features (opt-in agentic workflows for desktop and SRE use cases) ship progressively as they reach quality bars.

Canonical ramps up teams to keep pace with new open weight model releases and expand hardware-optimized variants across more silicon platforms.

Six months after the deliberate adoption push begins, Canonical expects organizational learnings from the diverse team-by-team experimentation to crystallize into firmer practice.
