Ubuntu's Next Chapter: Local AI, Confined Agents, and a Bet Against the Cloud-First OS

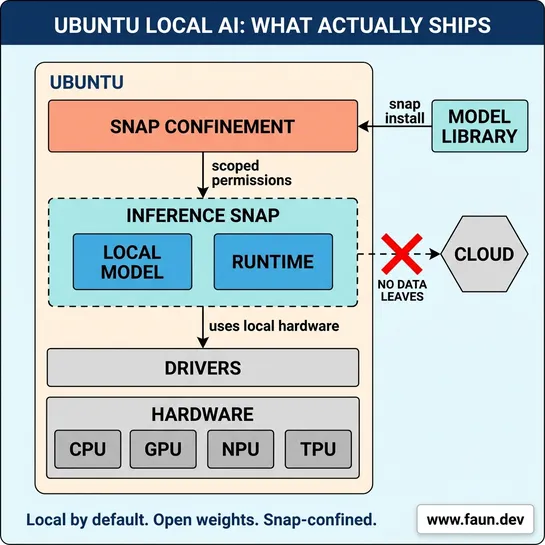

Ubuntu is getting local AI as a native capability over the next year: inference snaps that install models like any other package, AI-powered accessibility features, and confined agentic workflows for both desktops and server fleets. Canonical is betting on open-weight models, local-by-default inference, and snap confinement, a deliberate counter to the cloud-first AI direction that Microsoft, Apple, and Google are taking with their operating systems.