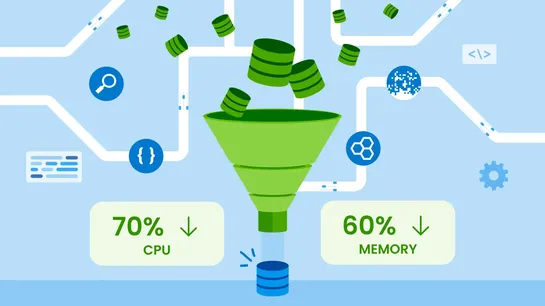

How We Saved 70% of CPU and 60% of Memory in Refinery’s Go Code, No Rust Required.

Refinery 3.0 cuts CPU by 70% and slashes RAM by 60%. The trick: selective field extraction from serialized spans. No full deserialization. Fewer heap allocations. Way less waste. It also recycles buffers, handles metrics smarter, and is gearing up to parallelize its core decision loop...
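
To make the idea concrete, here is a minimal Go sketch of selective field extraction combined with buffer recycling. It is not Refinery's code: the wire format (a one-byte key length, the key, a two-byte big-endian value length, the value) is a toy assumption, and `writeField`, `extractField`, `getBuffer`, and `bufPool` are hypothetical names chosen for illustration. The point is only that a single field can be read by skipping over the others, with no intermediate map or struct.

```go
package main

import (
	"bytes"
	"encoding/binary"
	"fmt"
	"sync"
)

// bufPool recycles encode buffers so each span does not allocate a new one.
var bufPool = sync.Pool{New: func() interface{} { return new(bytes.Buffer) }}

// getBuffer returns an empty buffer from the pool (hypothetical helper).
func getBuffer() *bytes.Buffer {
	buf := bufPool.Get().(*bytes.Buffer)
	buf.Reset()
	return buf
}

// writeField appends one key/value pair in the toy format assumed here:
// [1-byte key length][key][2-byte big-endian value length][value].
// Keys must be under 256 bytes and values under 65536 bytes.
func writeField(buf *bytes.Buffer, key, value string) {
	buf.WriteByte(byte(len(key)))
	buf.WriteString(key)
	buf.WriteByte(byte(len(value) >> 8))
	buf.WriteByte(byte(len(value)))
	buf.WriteString(value)
}

// extractField walks the payload and returns the value stored under want.
// Other fields are skipped by length, never decoded. The returned slice
// aliases payload, so it is only valid while payload is.
func extractField(payload []byte, want string) ([]byte, bool) {
	for len(payload) > 0 {
		keyLen := int(payload[0])
		valStart := 1 + keyLen + 2
		if len(payload) < valStart {
			return nil, false // truncated header
		}
		key := payload[1 : 1+keyLen]
		valLen := int(binary.BigEndian.Uint16(payload[1+keyLen:]))
		if len(payload) < valStart+valLen {
			return nil, false // truncated value
		}
		if string(key) == want {
			return payload[valStart : valStart+valLen], true
		}
		payload = payload[valStart+valLen:] // skip this field entirely
	}
	return nil, false
}

func main() {
	buf := getBuffer()
	defer bufPool.Put(buf) // hand the buffer back for reuse

	writeField(buf, "trace.trace_id", "abc123")
	writeField(buf, "name", "GET /checkout")
	writeField(buf, "duration_ms", "42")

	if id, ok := extractField(buf.Bytes(), "trace.trace_id"); ok {
		fmt.Printf("trace id: %s\n", id)
	}
}
```

Because `extractField` returns a slice into the original payload, the caller must copy the value before the buffer goes back to the pool if it needs to outlive it. That trade-off (fewer allocations in exchange for stricter lifetime rules) is typical of this style of optimization.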