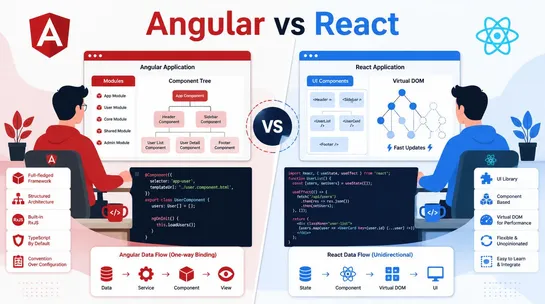

Angular vs React: Which Framework Is Better for Web Development?

Angular vs React: discover the main differences, performance, and use cases to choose the best framework for modern web development projects in 2026.

You know the drill: build a product roadmap in Jira, create your product backlog, review it, update the user stories, come up with a sprint goal before the meeting, and finally, review every story to decide which ones need to be completed this sprint. Easier said than done, right? Well-planned sprint..
