Scaling Autonomous Site Reliability Engineering: Architecture, Orchestration, and Validation for a 90,000+ Server Fleet

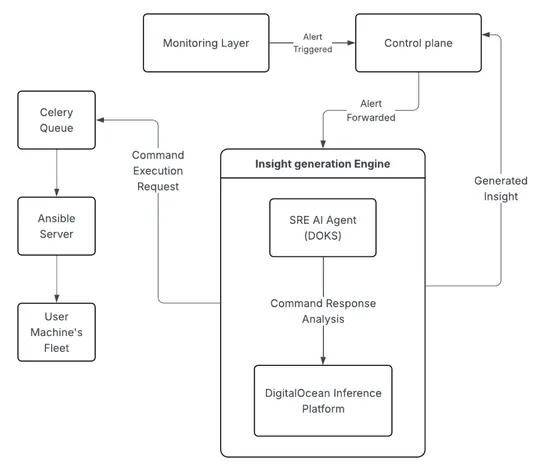

As Cloudways scaled from a bootstrapped startup to a leading managed PHP hosting service, its support load grew with it. Early on, Cloudways recognized the opportunity to implement an AI-based SRE agent to reduce the burden on support teams and provide faster diagnosis and resolution...