Demystifying OpenTelemetry: Why You Shouldn’t Fear Observability in Traditional Environments

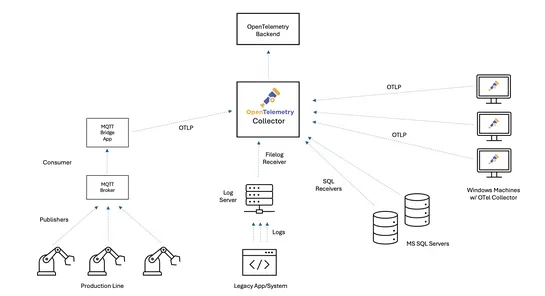

OpenTelemetry is friendly with the past. It now pipes real-time observability into legacy systems: no code rewrite, no drama. Pull structured metrics straight from raw logs, Windows PDH counters, or SQL Server stats. It doesn’t stop there. Got MQTT-based IoT gear? OTLP export or lightweight adapters …
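What does "structured metrics straight from raw logs" look like in practice? The snippet below is not OpenTelemetry code. It is a minimal, standard-library Python sketch of the underlying idea: match unstructured log lines against a pattern and emit named metric points with attributes. The log format (`status=` and `duration_ms=` fields), the metric names (`legacy.requests`, `legacy.request.duration.max_ms`), and the `MetricPoint` type are all hypothetical, chosen only for illustration. `MetricPoint` is a simplified stand-in, not the OTLP data model.

```python
import re
from collections import Counter
from dataclasses import dataclass, field

# Hypothetical legacy log format (an assumption for this sketch):
# "<timestamp> <LEVEL> <free text> status=<3-digit code> duration_ms=<int>"
LINE_RE = re.compile(
    r"^(?P<ts>\S+)\s+(?P<level>[A-Z]+)\s+.*?"
    r"status=(?P<status>\d{3})\s+duration_ms=(?P<duration>\d+)"
)


@dataclass
class MetricPoint:
    """Simplified stand-in for a metric data point (NOT the real OTLP schema)."""
    name: str
    value: float
    attributes: dict = field(default_factory=dict)


def logs_to_metrics(lines):
    """Turn unstructured log lines into structured metric points.

    For each status code seen, emits a request count and the maximum
    observed latency. Lines that don't match the pattern are skipped.
    """
    counts = Counter()
    max_latency = {}
    for line in lines:
        m = LINE_RE.match(line)
        if m is None:
            continue  # not a request line (e.g. a debug message); ignore it
        status = m.group("status")
        duration = int(m.group("duration"))
        counts[status] += 1
        max_latency[status] = max(duration, max_latency.get(status, 0))

    points = []
    for status in sorted(counts):
        attrs = {"status": status}  # attribute key is hypothetical
        points.append(MetricPoint("legacy.requests", counts[status], dict(attrs)))
        points.append(
            MetricPoint("legacy.request.duration.max_ms", max_latency[status], dict(attrs))
        )
    return points


if __name__ == "__main__":
    sample = [
        "2024-05-01T12:00:00Z INFO GET /orders status=200 duration_ms=35",
        "2024-05-01T12:00:01Z INFO GET /orders status=200 duration_ms=80",
        "2024-05-01T12:00:02Z ERROR GET /pay status=500 duration_ms=1200",
        "2024-05-01T12:00:03Z DEBUG cache warmed",
    ]
    for point in logs_to_metrics(sample):
        print(point)
```

The legacy application keeps writing the same text it always has; only the reader changes. In a real deployment, the parsing and export would be handled by OpenTelemetry's own components rather than hand-written code like this.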